Abstracts

A

Andean, James ; Olarte, Alejandro - Electroacoustic Performance Practice in Interdisciplinary Improvisation

James Andean ; Alejandro Olarte

Sibelius Academy, University of the Arts Helsinki (Finland)

Technological change over the past decades has seen electroacoustic music move increasingly out of the studios, onto the stage, and from there to broader, more varied, and more flexible performance contexts. This in turn has brought electroacoustic and electronic tools and methodologies into ever more intimate contact and collaboration with the full range of arts practices, from other musical forms, to the other performing arts, and beyond. While none of this is entirely new in and of itself – performance collaboration has been a part of electroacoustic practice since the earliest days of the form – we see today a level of integration that begins to dissolve boundaries between genres and between art forms. As a result, performer roles expand beyond previous limits and borders; practices shift and lines blur; and the notion of electroacoustic performance practice becomes less clearly outlined, dissolving instead into a more fluid pool of performance possibilities, opportunities, and affordances.

These developments pose a number of challenges, as electroacoustic performance practice is reconfigured, renegotiated, and reinterpreted as it evolves and dissolves into these fluid, malleable, and transitioning performance contexts. This paper will examine some of the consequences and issues this implies for electroacoustic performance practice, focusing specifically on the context of interdisciplinary improvisation, as this latter arguably represents a particularly clear case of both a fluid performance situation, and of the dissolution or renegotiation of boundaries between practices and art forms. Supporting references will be drawn from the Helsinki-based Research Group in Interdisciplinary Improvisation, as well as related performance and research groups, including Sound & Motion, the Liikutus project, and the Helsinki Meeting Point. We will also briefly discuss some of the methodological issues and approaches which may be particularly well-suited for research in these areas.

The projects mentioned above involve collaborative improvisation between performers from a range of fields, including sound, music, theatre, performance art, dance, studio arts, and film and video. While there are a number of fascinating research questions involved with such collaboration, we will focus on those which hold most relevance for electroacoustic practice.

The first of these involves the incorporation of technological means and media by a number of the practitioners and performers involved, for example musicians, sound artists, and video artists. How does the inclusion of digital and other technologies affect the ability of individual performers and the group as a whole to achieve ‘now-ness’, to be fully engaged with the moment, as is so critical in improvisation? Many of these tools rely on modes of use which involve forms of analysis and preparation – coding or patching, for instance – which most commonly take place outside of the performance context. Can such acts be made performative, and if so, can they be sufficiently improvisatory? Or are the cognitive modes involved too far removed from those required for full presence and commitment to free and spontaneous improvisation? If, on the other hand, performance aspects of digital tools are prepared in advance, are they still fully suitable to the free improvisation context, or does this degree of advance preparation constitute a level of creative planning which is potentially antithetical to the freedom to follow where a freely improvised performance might lead? Are performers who rely on such tools fully able to integrate with others – performance artists, dancers, instrumentalists, studio artists – whose output does not rely on technological resources, or is there a noticeable difference in organic and spontaneous involvement?

Questions more specific to sonic performers involved with interdisciplinary improvisation can also be raised here. For instance, the use of electronics at times results in the potential lack of performance gesture – in the use of a laptop for instance. Is this problematic in communicating with other performers, or across art forms? Sonic performance gesture as a visual cue can be useful on a number of levels, from identifying a given sound source among a group of performers, to offering a preliminary level for engagement. Does its absence prevent the necessary level of fluid communication and interaction?

Perhaps most importantly, what transformations do electroacoustic performance practices undergo as a result of this meeting and melting of artforms? This occurs on two levels: first, how does the electroacoustic performer engage with performers from the other performance arts; second – and perhaps more relevantly – what happens to electroacoustic practice in a context in which the role of a given performer transcends boundaries, passing fluidly from sound, to movement, to more theatrical aspects, or to drawing, painting, or film? Or, more likely, in a performance act which combines aspects of any number of these into a single expression? Does electroacoustic practice dissolve in this multifaceted artistic pool? Or are there core elements which remain firm, and are retained across and throughout this transcendence of historical performance categories?

In tackling such questions in a performance research context, as with any research-oriented work, one must choose the methodological framework best suited for the project at hand, in terms of both research process and desired results. Specific methodological challenges faced here include those generally encountered when the object of research is process, rather than artistic outcomes or artefacts; as well as the acknowledged difficulty of improvisation as an object of research, due to its profoundly ephemeral and transitory nature, and the extremely broad interdisciplinarity which is at the core of the projects, calling for research strategies that take this into account, such as Gibbons’ ‘Mode 2’ research (Gibbons et al. 1994) and Wasser & Bresler’s ‘interpretive zones’ (Wasser & Bresler 1996; Hoel 1999).

For these reasons, among others, projects such as those discussed here tend to turn towards relatively recent methodologies of artistic research, beginning with practice-based research, or, further, practice as research, which perhaps better recognize some of the challenges which are particular to performance research (Barrett & Bolt 2007; Borgdorff 2012; Smith & Dean 2009). Further, these projects employ a methodology drawn from action research, in which the more traditional process of hypothesis – observation – conclusion is replaced by a cyclical model of planning – action – observation – reflection – repeat, or reflect – plan – act – observe – repeat (Carr & Kemmis 1986). A primary difference here is the emphasis on observation and reflection, with a corresponding lack of emphasis on conclusions; this is perhaps appropriate to the context under consideration, as it is difficult to claim definitive conclusions in a performance context which is under constant reinterpretation and renegotiation, as it is arguably impossible to establish an epistemological framework sufficiently stable for the research results to be claimed as definitive, or to be rigorously transferable beyond the confines of a given research context.

Despite such drawbacks, the strength of such research processes – focused more on finding new or novel questions than answers, and on opening further avenues for development and exploration than conclusive determinations – is regularly demonstrated in research areas such as that being considered here, due to their flexibility, their ability to remain responsive to multiple disciplines and paradigms simultaneously, and their ability to approach organic or intuitive artistic processes as suitable research subjects. As employed here, they offer strong implications for electroacoustic performance practice as it continues to reinvent itself in the increasingly mobile, shifting, and multi-faceted performance contexts with which today’s performers increasingly find themselves engaged.

B

Bergsland, Andreas; Tiller, Asbjørn - I am sitting in that room – reverberation, resonance and expanded meanings in Lucier’s I am sitting in a room

Andreas Bergsland ; Asbjørn Tiller

Norwegian University of Science and Technology (Norway)

This paper explores meaningful relationships between voice and aural architecture, and between reverberation and resonance, implicit in realizations of Alvin Lucier’s I am sitting in a room, expanding the range of possible meanings beyond the fixed media versions of this electroacoustic classic.

The interior of a building always carries its own sound. It contains what Blesser and Salter term an aural architecture. The aural architecture in this respect is an equivalent to the physical space of the building: its volumes, geometrical construction, and the materials making up the building’s surface. The major influences on the aural architecture of a specific architectural space are the sound sources situated in that space, and how the sound from those sources is reflected and diffused by the architecture. In this sense, the aural architecture will also influence our moods and associations in the listening experience.

The aural experience of an interior is closely connected to the term reverberation. Reverberation in the physical sense refers to sound reflections by nearby surfaces, and by this reverberation implies the size and shape of the space as well as the materials of its surfaces.

In addition, the term resonance to a certain degree overlaps reverberation in the sense that it can also imply sound reflection. However, resonance also involves the synchronous vibration of a surrounding space or a neighbouring object.

In this paper, we want to address the concept of resonance not only by the means of a physical definition. Resonance also implies a definition concerning mental imagery; the power or quality of evoking or suggesting images, memories, and emotions, thus referring to allusions, connotations or overtones. In this respect, the term corresponds with the aural architecture, with its influence on our moods and associations in the listening experience.

To address these issues, we have chosen Alvin Lucier’s I am sitting in a room as our case study. On the one hand, referring to this work makes it possible to explore how the aural architecture of an interior transforms the initial voice to the point of unintelligibility. On the other hand, by suggesting an augmentation of the elements voice and room in this piece, it will be possible to discuss how the work can trigger mental images for the audience. Specifying the story told by the voice, and setting the work in a specific room, will guide the mental imagery of the audience on the basis of memory. The perceived space of the work will then be a combination of the composed space and the listening space.

Like several other electroacoustic works from the 1960s and 1970s, I am sitting in a room has had a dual existence since it was composed in 1969. On one side, it has existed and still exists in fixed form as a “tape composition”. In particular, two versions made by Lucier himself in 1970 and 1980 have been distributed on LP and CD, and the literature on electroacoustic music and sound art has frequently made reference to these. For instance, in both Broening’s and LaBelle’s analyses, Lucier’s stutter, the implicit references to it in the text, and the smoothing out of these “irregularities” by the gradual unfolding process are the central issues. Moreover, these more or less canonized recordings, and particularly the latest, have frequently been performed, i.e. played back, in acousmatic concerts, and, as Collins has noted, have thereby made a point of bringing “the public into a private space”, into a room “different from the one you are in now”. Thus, the work seems to exist in a relatively “closed” and authoritative form, where Lucier’s own voice (and stutter), his own text (with reference to the stutter), and the rooms he has chosen (smoothing out the stutter) are crucial components, and which therefore restricts the range of possible meanings associated with the work.

On the other side, however, and perhaps more in later years, the work has existed in the form of live realizations of the written score, which provides a set of instructions for the performance and a text passage that can be used. These versions are nevertheless firmly bound to the score, since it explicitly allows for “any other text of any length”, and encourages more experimental versions using different speakers, different (and multiple) rooms, different microphone placements, and lastly, “versions that can be performed real time”. Hence, the score seems to open up possibilities for a much wider range of realizations, which would also naturally widen the scope of possible meanings for a perceiver. By choosing speakers with a particular story or memory from a certain room, and letting the performance take place in that room, we contend that the work can take on another level of meaning in which, in contrast to Lucier’s own versions, the relationship between the “I” and the “room” is not arbitrary, but highly significant.

In our paper, we would like to exemplify how such a realization can create a heightened emotional and intellectual experience, both for performers and audience, highlighting the room not only as an acoustic environment but as the trigger of memories, imagery and emotions. We discuss a realization of the work in which Håkon, an anthropologist in his early 40s, was taken back to an empty hospital room where, a few decades earlier, he had visited his grandfather for the last time before the latter died. Thus, the room itself was an important factor in bringing back memories of a highly emotionally charged situation, which subsequently amplified the recollection process for him, giving the telling of his story increased actuality and impact. Like Lucier, he started by verbally locating himself, but he spontaneously rephrased the opening of the text from the score: “I am sitting in that room...”, thereby making the link between his memories and the space explicit.

Subsequently, in the process whereby his story was iteratively played back in the room, the resonances that gradually built up in the room created not only a sonically pleasing process, but also a strong metaphor for the interrelationship between Håkon and the room he was sitting in, playing on both the physical and the mental meaning of the concept of resonance, as presented above: the room resonated in Håkon, triggering mental images of how he experienced the room in the past, and then, by telling his memory, and letting the sound of himself telling it progressively excite the physical resonances of the room, he could follow the room as it “took back” his memory while gradually transforming it into an aesthetically pleasing object. Thereby, the process had an element of consolation and catharsis to it, which could also be felt by those observing the process from the outside. Lastly, in our presentation we suggest a branch of realizations that might use this piece both as a form of music therapy, and as a way of creating a new level of meaning and meaningfulness for audiences observing such a process, and problematize the degree to which such realizations are localized in the peripheral zone of the space of realizations that Lucier’s score delineates.

Blackburn, Manuella; Nayak, Alok - Performer as sound source: interactions and mediations in the recording studio and in the field

Manuella Blackburn ; Alok Nayak

Liverpool Hope University (England)

In this paper, the author takes particular interest in the collection of sound material from musical instruments (for use in both acousmatic and mixed works) and how the composer manages creative intent and concepts while collaborating with a performer. Interactions at this stage ultimately impact upon the sound material collected as well as the final composition. The frontier for exchange during these composer / performer encounters enables collaborative work to flourish – but what are the optimum conditions for a successful recording session? Is there a requisite limit or a bare minimum on how prescriptive one should be as a composer when directing the performer in order to avoid confining the creative possibilities of one’s own imagination or the performer’s own input? And how does one navigate the same situation cross-culturally with foreign and ethnic instruments where unfamiliar performance practice traditions and language barriers may exist?

It is common to interact with object sound sources (e.g. keys, coins, a slinky, etc.) in an exploratory fashion, prizing out unusual gestures and textures while always on the lookout for those happy accidents that might lend themselves well to the transformation process in the studio. With instrumental sound sources, where a performer is involved, the same exploratory activity may not be immediately possible and we must therefore effectively communicate to the performer our request for specific experimentation with sound types and timbres. Approaches to this activity differ from composer to composer and modes of collaboration between composer and performer subsequently change as a result. How we, as composers, conduct this sound capturing process is led ultimately by what we want to work with in the studio. With the use of composer interviews, existing repertoire and previous noteworthy collaborations, I aim to propose, and distinguish between, the following modes of collaboration:

• Instructive / directional: The composer is prescriptive in outlining how and what the performer is to play.

• Explorative / interactive: Details of material remain somewhat unspecified. Some loose ideas and concepts may be discussed beforehand. Contributions from both sides allow a creative exchange to flow.

• Unstructured: An open session where the performer is given free rein / carte blanche to decide what to play. A typical example of this is when a performer demonstrates extended techniques specific to their instrument – the composer acts as a listener and thus learns directly from this process what the available sound possibilities are.

Two further distinctive situations are worthy of discussion:

• The composer becomes the performer. The composer experiments with an instrument that they have no formal training on as a means of generating sounds. This also applies to situations where the composer performs or plays with objects (not instruments as such) often in unconventional ways.

• Adapting to source. The composer adapts to a sound source or performer. This covers on-the-fly field recordings (e.g. recording environmental sounds, street performers, etc.) where the composer cannot intrude upon or affect the sounding outcome. All adaptations here refer to technical considerations (e.g. position, microphone handling and volume control on the field recorder).

This paper examines the authority and instructive role of the composer in the recording studio along with how one might take ownership of these captured sound materials in future creative work. Finding oneself within the material generated by others (sounds, notes, phrases, motifs and even melody lines), especially from unfamiliar cultures and contexts, can be challenging. This part of the paper draws upon first-hand accounts of collaborating with Milapfest (UK, Indian arts development trust) in building an online sound archive of Indian musical instruments as part of an ongoing educational outreach program at Liverpool Hope University. The sound archive material came to exist as a by-product of collecting sound material for my own creative work (two new electroacoustic music works exploring the use of culturally significant sound material). A significant proportion of this research project (supported by the Arts and Humanities Research Council, UK) involves individual recording sessions with approximately 25 - 30 instrumentalists from this highly specialised performance tradition. This raises important issues regarding cross-cultural exchange and what, as an electroacoustic music composer, I might achieve sonically from exploring their practice, along with the question of how and what the performers take away from these encounters. Within the ‘give and take’ of a cross-cultural collaboration, I am posing the question of how possible it is to exert one’s creative and personal compositional voice when considering each different mode of collaboration. As creative projects evolve, take shape and are eventually performed, how is the performer’s reception of the final work informed by the early stage collaboration between composer and performer? The collection of both idiomatic and unconventional sound materials provides a discussion point within this discourse, which will be supported by personal perspectives and those from performers involved in this collaborative process.

Böhme–Mehner, Tatjana - From Parallel Societies to a Global Network: A historiographical approach to the relationship of musicology and electroacoustic music

Tatjana Böhme–Mehner

Martin Luther University Halle-Wittenberg (Germany)

Did composer X know the work of Y when he wrote his very important piece Z? Was inventor P influenced by the discovery of V when he developed his procedure of sound generation? Did sound artist W know the special conditions of festival R, where his piece S was first performed, and did he know about the context of sound installations presented there? Questions like this are quite usual in the arts and in musicology, inasmuch as the latter discipline is interested in social matters at all.

The phenomenon that comparable things develop at the same time under more or less comparable conditions has always interested the arts from the perspective of dependence and independence. Nevertheless, network theory in the narrow sense has not so far been seriously employed for the discussion of the appearance of artistic inventions.

Musicologists usually love to identify relations and interactions – often much more than simple parallels. In this age of social networks it often appears unimaginable that something could develop without another’s knowledge, that it is possible to do something on one side of the ocean that is not noticed on the other side, that there was a time – not long ago – in which real spatial distances were just as absolute in matters of time. And, since they also marked economic differences to some extent, these distances could be interpreted as social ones and thus, in another way, as cultural distances as well, although the internal structure of developing subcultures or even parallel societies at different ends of the world is quite similar, if the conditions are comparable.

When, in today’s research in the arts, we raise or are confronted with questions such as whether and what Pierre Schaeffer, for example, knew about the history of “Hörspiel” in the 1920s in Germany, or how close the contacts of Werner Meyer-Eppler with researchers in the USA were, or which sounds really got through the “Iron Curtain” from Western to Eastern Europe and back, or other questions of a similarly hypothetical character, we have to reflect on the real as well as the potential relations. It is not only the manifest contacts or the library of this or that musician and so on which have to be taken into account when we are looking for relations and interpenetrations, but also the possibility of communication, the existence and potential of obvious or latent networks. Every researcher makes assumptions against a backdrop of communicative experiences. As everybody knows, this is a latent danger facing every researcher in the arts and humanities. Working with young students, we always have to ask questions such as: how did communication happen in a world in which self-promotion via Facebook or YouTube was not yet possible? How were the results of communication stored and kept accessible without the internet? The internet never forgets, as we know, but people do forget, and soon. Thus, how do we manage, within our research approaches, the resulting differences of archiving and storing in a broader sense?

This background of experience may be a problem reflected in every discipline, but within the study of electroacoustic music it could have an important and interesting function.

This talk is about the development of network structures within electroacoustic music and its study from a historiographical perspective. It takes as a departure point the idea that when people first became interested in using electricity for the production and reproduction of a new and modern kind of music, what developed can, in terms of social theory, be seen as a certain kind of parallel society. This parallel society developed from its beginning a structure which, from today’s perspective, can be seen as a network in the narrow sense defined by network theory. The reasons for that can be found both in the differences between what was first called electric and later electroacoustic or electronic music and the social world of traditional music, and, primarily, in the new and multilayered demands this music placed on people involved on all sides of the music market.

The positioning between the disciplines – music, communication and media sciences, engineering and so on – between the markets – classical music, radio art, sound art, technologies and others – and thus between their audiences developed a structure which could be understood and analysed with the help of network theory long before this approach became important within the social and communication sciences.

Thus, we have to distinguish between the network as a social theoretical approach, the network as a technological term and networking moments as parts of practical social life structure.

In our approach the first and the last of these three are of special interest – but in order to compare possible perspectives we introduce the idea of parallel societies to provoke a kind of dialectic discussion.

First of all, we tell the history of electroacoustic music as both the history of the development of a network or networks and that of very strong parallel societies. Thus, it is first of all a discussion of the appropriateness of social theories or approaches for dealing sociologically with electroacoustic music.

For that purpose we go quite far back in history and use the contemporary situation of a nearly global networking society for comparison; we consider the social structures of electroacoustics from their beginnings in early radio to the point when computer music fostered the development of another social sphere. Thus, the networks we are talking about developed first of all around the big traditional studios.

Returning, on another level, to the position of the researcher in the era of social networks, the talk will ask at the end how electroacoustic studies can profit from the analysis of the network character on the one hand, or of the character of a parallel society on the other, how they are part of that, and how this can be reflected.

Although today nearly all information is available to everyone, we often do not know what our neighbour is doing concerning the same problems we are dealing with, because s/he may be interlinked with other networks. Thus, at the end, it is shown in a provocative manner how networks can quickly take over the classical risk of parallel cultures.

Bonardi, Alain ; Dufeu, Frédéric - How can the behaviours of digital instruments be modelled? Fuzzy-logic proposals based on the works of Philippe Manoury

Alain Bonardi ; Frédéric Dufeu

Université Paris 8 / Ircam (France) ; University of Huddersfield (England)

For the musicologist, the computing environment intended for the performance of an interactive mixed work can constitute an important object of analysis. Attention paid directly to the program on which the performance relies makes it possible to identify unambiguously the consequences of the input information, derived from instrumental or vocal playing, on the musical and sonic processes. The aim of such an approach, which is organological in nature, is to assess the expressive range of the digital instrument and, through it, the interpretive potential of the musical work. In cases where the computer programs are available and still usable, their high degree of customization poses a significant difficulty for the musicological examination of a set of cases: from one composition to another, the musical or sound processing units and their interconnections vary both in their nature and in their implementation. Although the instrumental behaviours encountered across the repertoire of mixed music share a certain number of common traits, each study is liable to call for an entirely new approach, without significant benefit drawn from previous experience.

The aim of our paper is to present modelling perspectives for the behaviour of digital musical instruments. We will draw on an experiment using the formalisms of fuzzy logic in Section III of Pluton by Philippe Manoury. We use the FuzzyLib library developed by Alain Bonardi and Isis Truck in the Max / MSP environment, which can therefore be connected directly to the Pluton patch. In the spirit of Computing with Words as described by Lotfi Zadeh, this formalism allows us to describe the phenomena at the input and output of the transformation modules semantically, moving from physical measurement back to an assessment grounded in perception; it is also possible to reason at this semantic level through inferences. In fuzzy logic, it is thus possible to work on the musical vocabulary of dynamics and tempi (pianissimo, moderato, etc.) in a non-binary way rather than on mutually exclusive ranges of numerical values, and to describe the transfer functions by fuzzy rules.

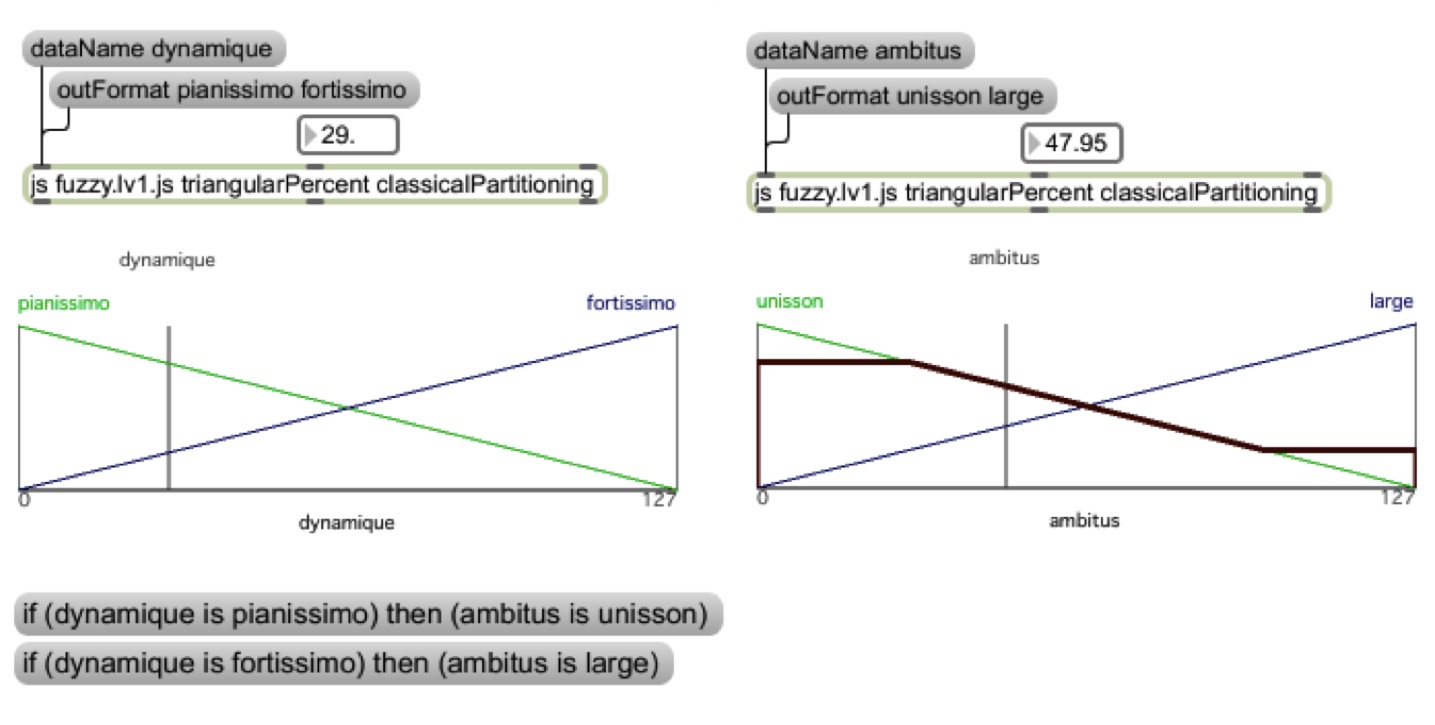

The example in the figure shows how two linguistic variables called dynamics and ambitus were modelled in Section III of Manoury’s Pluton. Each has two classes, associated with fuzzy subsets, represented in the diagram by the ascending and descending functions corresponding to a degree of truth; the first relies on the notions of pianissimo and fortissimo; the second on unison and wide. Two fuzzy rules were defined, linking the two variables:

• If dynamics is pianissimo, then ambitus is unison

• If dynamics is fortissimo, then ambitus is wide

Un moteur d’inférences fondé sur le principe du general modus ponens met en œuvre le raisonnement et permet de déduire l’ambitus en fonction de la valeur en entrée de la dynamique.
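The two rules can be illustrated with a minimal Python sketch. It is not the FuzzyLib implementation and does not reproduce the Pluton patch: the normalized dynamics scale from 0 to 1, the linear membership functions, the value of 24 semitones standing for "wide", and the weighted-average defuzzification (a simplified stand-in for a full generalized modus ponens engine) are all assumptions made for illustration.

```python
# Hypothetical sketch of the two fuzzy rules linking dynamics and ambitus.
# All numeric choices below are illustrative assumptions, not values from Pluton.

UNISON = 0.0   # ambitus in semitones for the class "unison"
WIDE = 24.0    # assumed ambitus in semitones for the class "wide"


def pianissimo(dynamics: float) -> float:
    """Descending membership function: degree of truth 1 at 0, 0 at 1."""
    return max(0.0, min(1.0, 1.0 - dynamics))


def fortissimo(dynamics: float) -> float:
    """Ascending membership function: degree of truth 0 at 0, 1 at 1."""
    return max(0.0, min(1.0, dynamics))


def infer_ambitus(dynamics: float) -> float:
    """Apply both rules and combine their conclusions.

    Rule 1: if dynamics is pianissimo, then ambitus is unison.
    Rule 2: if dynamics is fortissimo, then ambitus is wide.
    Each conclusion is weighted by the degree of truth of its premise.
    """
    w_pp = pianissimo(dynamics)
    w_ff = fortissimo(dynamics)
    return (w_pp * UNISON + w_ff * WIDE) / (w_pp + w_ff)


if __name__ == "__main__":
    for d in (0.0, 0.25, 0.5, 1.0):
        print(f"dynamics={d:.2f} -> ambitus={infer_ambitus(d):.1f} semitones")
```

Because the two memberships overlap, an intermediate dynamic activates both rules partially, which is the non-binary behavior that the fuzzy description is meant to capture.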

More generally, when a variable comprises five classes (for example, for dynamics: piano, mezzo piano, mezzo forte, forte, fortissimo), an algorithm was created by Isis Truck to shape and distribute the fuzzy subsets automatically from experimental data retrieved directly from the patch under study.

This approach makes it possible both to model the control, and therefore the behavior, of the patch in terms related to perception, and even to replace the patch or to contribute to its preservation, since it is a form of abstract notation of an implementation tied to a particular piece of software. Starting from a particular case of human-machine interaction found in a work emblematic of the use of real-time processing, we will discuss the relevance of this modeling for the analysis of mixed music and its prospects for development toward formalisms generalizable to a large proportion of the digital instruments of the contemporary repertoire. This approach constitutes a contribution to the organology of real-time sound-production systems.

Bossis, Bruno - Behavioral interactions in the context of Electroacoustic Music

Bruno Bossis

Université Rennes 2 / MINT-OMF Université Paris-Sorbonne (France)

When the user can control a machine based on what he perceives from this machine, a loop is created. From this definition, an acoustic musical instrument could be considered as interactive. However, in this case, the behavior of the machine is determined by its initial and physical construction, and the machine doesn’t actually interact with the musician. The instrumentalist’s action is a gesture and he listens to the resulting sound. Thus, the loop is asymmetrical. The instrument is a device that doesn’t act by itself.

On the contrary, and we will insist on this difference and other particularities in order to introduce new developments in musicology, an electroacoustic device can act as an operative machine by itself. Its response to a request by the musician depends not only on the instrumentalist’s gesture and on its mechanical construction; its answer also depends on how it has been programmed by the composer or his assistant. An electroacoustic device involves real interaction because, for a given event, the electronics can be designed with a specific structure and a specific behavior. It is “composable” in the same sense in which we say a score is composed. In the interactive exchange, the answer depends on the question received and on the rules determined by the will of the composer. These rules define a complex behavior which poses new problems for musicological analysis. Never before has this sort of loop been involved in music.

Moreover, the structure of the electroacoustic device network and its behavior are defined dynamically during the piece. Such devices are often constructed as networks of modules, changing during the piece and exchanging information flows, and they can be partly random and partly based on a learning process. Interactivity can be stable and balanced at a given time, but it evolves along the temporal axes of music being composed or being played. It may also be endowed with memory, and its reaction may be shifted in time; that is to say, it may take account of past control values or past sounds. Since the early modular synthesizers, the principle of the modular network, already mechanically implemented in the church organ, has taken on another dimension. This time, a function representing a sound wave can interact with another function of the same type. Sound can modulate another sound, and all kinds of combinations are theoretically possible. This process is utterly different. When technology became digital, sequential behaviors with all sorts of serial and parallel branches became implementable, e.g. with a qList. A network of modules can also work by relying on another temporal structure. All forms of manipulation are possible, including asynchronous structures using memory of what happened before.

The principle of the network can be local, as in a device comprising several modules. At first sight, this seems to be the oldest stage in the evolution of complex electroacoustic devices. However, the first examples of concerts involving multiple sites in one way or another began to appear when Telharmonium sounds were conveyed by the telephone network, and then when tape recorders turned out to be too heavy to be carried into the concert hall at the time of Schaeffer. More recently, a complex patch in PureData or in Max / MSP can be conceived and understood as a network of local modules. It can also be spread as computing power distributed over several computers, or as modules communicating through MIDI, UDP, or IP-based networks, in one or several locations. But still, the notion of behavior and operative rules underlies the functioning of the whole.

Drawing on various examples, my reflection will begin with a typology of behavioral interactions, in a systematic attempt to classify the corpus of musical works. Building on this notion of behavior, it will then construct new methodological approaches toward the most general possible musicology and analysis of electroacoustic music in the context of interactivity and networks.

The concept of behavior is deterministic in itself. For example, a sound can be generated by a system from an action on a human / machine interface. The result is determined not only by the action of the player, but also by the behavior of the whole system. If it were programmed differently, the same action of the interpreter could trigger another transformation of timbre, a reverberation, a chord progression, a melody, a rhythm, an automatic monitoring or any type of event. But each time, the instrument response is controlled and depends on the wishes of the composer. More specifically, this response is inseparable from music creation, writing, style and aesthetics. The composer needs to “write” the instrumental response to the demands of the interpreter at any given time of the work. This reaction may also take place moments or minutes later. Moreover, nothing prevents the composer from introducing a random part into this behavior or from using former events coming from the same performance or even from former concerts. Examples will be proposed.

In summary, the concept of behavior within a coherent system can be precisely defined in nine statements:

1. A behavior has an identity by itself and a single one.

2. At any given moment, there is only one behavior.

3. Two different behaviors are necessarily differentiated by at least one different characteristic.

4. A behavior can vary over time gradually or discontinuously (successive stable states).

5. A behavior can include data belonging to previous events.

6. A behavior can be constrained. For example, a sound generation may not exceed a certain energy level in a frequency band.

7. The characteristics and constraints can be more or less random, but the opening of behavior always remains controlled in one way or another.

8. Behaviors can be interconnected and depend on each other. Thus, for instance, reverberation will apply only if a resulting value from a first process exceeds a certain threshold.

9. There may be behaviors of behaviors (meta behaviors), hence a hierarchical structure of behavior. Overall behavior is then the combination of a set of behavior units.
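Some of these statements can be made concrete with a short Python sketch. It is only an illustration under stated assumptions, not a model taken from any work in the corpus: the class names `EnvelopeFollower` and `GatedReverb`, the four-value memory, the threshold of 0.5 and the ceiling of 0.8 are all hypothetical. The first unit uses data from previous events (statement 5), the second is constrained (statement 6) and depends on the first through a threshold (statement 8), and `run` combines the two units into an overall behavior (statement 9).

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class EnvelopeFollower:
    """First behavior unit: averages the current level with remembered past levels."""
    memory: deque = field(default_factory=lambda: deque(maxlen=4))

    def step(self, level: float) -> float:
        self.memory.append(level)
        return sum(self.memory) / len(self.memory)


@dataclass
class GatedReverb:
    """Second behavior unit: a reverb send that depends on the first unit's result."""
    threshold: float = 0.5  # hypothetical threshold on the first process
    max_send: float = 0.8   # hypothetical constraint on the output level

    def step(self, smoothed: float) -> float:
        if smoothed <= self.threshold:
            return 0.0                        # reverberation does not apply
        return min(smoothed, self.max_send)   # constrained response


def run(levels):
    """Overall behavior: the combination of the two behavior units."""
    follower = EnvelopeFollower()
    reverb = GatedReverb()
    return [reverb.step(follower.step(x)) for x in levels]


if __name__ == "__main__":
    print(run([0.0, 1.0, 1.0, 1.0, 1.0]))
```

The same input level of 1.0 produces different reverb sends over time because the response depends on remembered values as well as on the current gesture.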

As a consequence, in such a context, the analytical approach must be of a relational type. A relationship is a set of attributes that define a fact. Different types of relationships can be distinguished, for example those based on:

1. gestures in the human / machine interface;

2. time related processes (cues, synchronous interpolations);

3. triggering (automatic score following, for example);

4. flow and events related to the signal / controls;

5. electronic functions: recording, sampling, synthesizing, effects, score following process;

6. musical functions: intervals and melody, chords and harmony evolution, time structures and rhythm.

These categories are neither exhaustive nor exclusive. More generally, all of the above reflections show the magnitude of the task facing the composer, the interpreter, the musicologist and the analyst. We will try to propose some generalizing models in order to better understand what are the invariants of electroacoustic music in the context of networks and interactivity. In order to be rigorous and precise, the musicological approach must be renewed on the basis of exact definitions and typologies. The main goal is to model processes in electroacoustic music, i.e. to find general structures for better understanding this music.

Bullock, Michael T. - Feeling-oneself-feel: Alvin Lucier, alive in place and time

Michael T. Bullock

(USA)

This paper will examine several pieces by American composer Alvin Lucier, which depend on the contingencies of the performative body in a particular place during a particular duration. The composer turns his own body’s interaction with a space and with itself into both generative processes and musical material. The main focus of this paper will be Music for Solo Performer: For Enormously Amplified Brain Waves and Percussion (1966). Electrodes are attached to a performer’s head to pick up low-frequency brainwaves and transform them into sound waves emanating from loudspeakers. The movements of the loudspeakers are used to activate percussion instruments. I will investigate this piece’s relation to two later pieces by Lucier, Clocker (1978 / 88) and Bird and Person Dyning (1975). All three pieces are created live using a metastable feedback system: the performer’s body in a particular room at a particular time, with an audience, using electronics to transform certain aspects of the performer’s physical presence into music.

Rather than engaging in scientific investigation, in these pieces Lucier uses physical principles and mechanical processes to create for each of these pieces a conceptual heart that lives past their realization. The act of performance, and the life of the performer, overlap with the audience’s experience. The result is an observation of oneself as being alive in space and time, what Jean-Luc Nancy calls “feeling-oneself-feel.”

Music for Solo Performer (1966)

While brainwaves are the driving force in Music for Solo Performer, they do not act as control information but rather are treated as audio waves, albeit at frequencies lower than the human ear can hear. The waves are amplified through electronics and sent to loudspeakers capable of reproducing subaudible frequencies. The loudspeakers are attached to various percussion instruments, and the mechanical action of the loudspeakers vibrates the instruments. In this way Lucier avoids altering the waves in any way aside from amplification. Nonetheless, Lucier is careful to point out in the title that the waves are ‘enormously amplified,’ reminding the listener that there is a level of mediation between the brain’s activity and the final sounds. The waves are not modulated or decoded, simply amplified, but because they are treated like low-intensity sound waves in need of amplification, they are stripped of any speculative associations with mind control.

The performer is directed to become impassive and reduce or remove all visual stimuli, either by closing or unfocusing his or her eyes. In this way, the performer named in the title is practically un-performing, and even the word ‘solo’ comes into question as the performer’s brain waves make changes outside of his or her conscious will, changes which may come as a surprise to the performer.

For this paper I will briefly compare several performances of this piece: by Lucier, by Pauline Oliveros, and by John Mallia and Neal Markowski.

Bird and Person Dyning (1975) and Clocker (1978 / 88)

In Bird and Person Dyning, the performer’s entire body becomes the metastable element. Wearing in-ear microphones, the performer walks around a room containing several loudspeakers and an electronic birdcall. The microphones are connected to the speakers and generate feedback. The feedback changes gradually and constantly, depending on the performer’s position as well as the positions of audience members’ bodies. In Clocker, the ticking of a mechanical clock is sent via contact microphone through a digital delay; the speed of the delay is controlled by the galvanic skin response of the performer’s fingers at rest. The performer in these pieces is using electronics and physical objects (the birdcall, the clock) to mediate the interaction between his body and the room, and thereby create musical content that expresses that relationship abstractly and in real time.

Recent work with brainwaves

I will close the paper by returning the focus to the use of brainwaves in performance. I will discuss several projects of American composer and performer Alex Chechile, who uses digitized brainwaves in live performance, in combination with more traditional performance activities. Chechile has used his own brainwaves as well as those of collaborators, including Pauline Oliveros and this author. His use of brainwaves is distinct from Lucier’s, in that Chechile transforms them into control signals via software, which can in turn be used to change the parameters of a filter or any other sound processor, or to make selections from a database of written music fragments to generate a score on the fly for the performer to read.

Regardless, the central conceptual heart is similar: a metastable characteristic of the performer’s physical being, changing over time, determines the unfolding of the piece. The most important difference between the Lucier pieces and these more recent works is that the performer who wears the electrodes is also performing an instrument, and is thereby faced with a new version of “feeling-oneself-feel”: the unusual but oddly familiar sensation of making musical decisions while simultaneously witnessing your brain make similar – or different – decisions.

C

Catanzaro, Tatiana Olivieri - Le technomorphisme au Brésil entre les décennies 1960-1970 À travers l’œuvre de Gilberto Mendes

Tatiana Olivieri Catanzaro

Université de Paris – Sorbonne (France)

In contrast to developments in other South American countries, which had important centers for electroacoustic research and composition, the lack of interest shown by Brazilian music schools and radio in electroacoustic music ended up restricting the activity of composers, until about three decades ago, to the “precarious conditions of private studios, assembled from equipment that responded poorly to the simplest manipulations, such as playing sounds backwards or varying the speed” (Neves, 1981, p. 188).

Until the early 1980s, the history of electroacoustic music in the country was limited to isolated attempts, the first of which may be considered the departure of the composer Reginaldo de Carvalho for Paris, as early as the 1950s. In France, Carvalho studied with Paul Le Flem and Messiaen and was able to take part in the experiments in musique concrète carried out at the former Centre Bourdan under the direction of Pierre Schaeffer. Back in Brazil, Carvalho remained a solitary pioneer for many years, and it was only in 1963 that another composer, Gilberto Mendes, recently returned from the Darmstadt Festival in Germany, also took an interest in electroacoustic music. His work Nascemorre, for choir and tape, composed shortly after his return, is evidence of this interest.

However, it was Carvalho who fought for the creation of an electroacoustic music center in Brazil, forming a research unit with a view to obtaining an institutional studio, and thus founding the Department of Music and Electronics at the University of Brasilia and Radio Educadora. Yet it was only in 1966, when he was appointed Director of the National Conservatory of Orpheonic Singing in Rio de Janeiro (later the Villa-Lobos Institute), that the Brazilian current of electroacoustic music could at last develop. Neves states: “During the period in which Reginaldo de Carvalho directed the Villa-Lobos Institute, the institution brought together all the young composers interested in musical research, who were nevertheless unable to complete their work for lack of financial support for the installation of real research studios.”

As a result, despite the financial problems experienced by the Institute, it enabled the rise of significant compositional research in the country. It was there, for example, that the composer Jorge Antunes, on joining the institution’s teaching staff in 1967, found the space to develop his research, inaugurating the Art Intégral laboratory and the Centre de recherches Chrome-musicales. With Antunes’s arrival at the Institute, the two main European currents came to be represented in Brazil: musique concrète, centered on the figure of Carvalho, and electronic music, centered on that of Antunes. Indeed, it is to Antunes that we owe the first purely electronic pieces created in Brazil: the Pequena Peça para Mi Bequadro e Harmônicos (1961) and the Valsa Sideral (1962).

Other composers also worked toward the institutionalization of electroacoustic music in Brazil, such as Jocy de Oliveira and Conrado Silva. Nevertheless, we note that few composers managed to work with electroacoustic music systematically in the country. This is probably because almost all the composers who took an interest in electroacoustic music and who were able to learn the craft in foreign studios had, on returning to Brazil, to face the reality of finding no adequate institutional support for electroacoustic research and composition to be pursued seriously. In this scenario, most composers either left the country or were led to abandon composing for this medium. This, combined with a traditionalist stance that prevailed in music education, prevented the expansion of electroacoustic production. Most of its history was therefore dependent, on the one hand, on the isolated work of composers who brought expertise and equipment from abroad and, on the other hand, on currents created by groups of composers and performers in a sporadic, discontinuous manner and without government support.

Despite the delay in the exploration of electroacoustic music in Brazil, the aesthetics of Brazilian contemporary music between the 1950s and the 1970s was greatly affected by the tendencies of European electroacoustic music. This is due to two specific phenomena: on the one hand, the presence of foreign composers who settled in Brazil and, on the other, Brazilian composers who studied or took part in festivals abroad, and who consequently experienced and were influenced by these questions and transplanted them to the country.

In order to bring out this musical experience, I conducted an interview in 2002 with the composer Gilberto Mendes (Catanzaro, 2003), who lived through these events intensely and who, like so many others, on returning to his homeland, found himself without any infrastructure with which to develop adequate technical work in this artistic field. Equipped with unsophisticated devices, such as ordinary three-speed tape recorders, the composer produced a significant number of pieces, in particular for the theater, which earned him a mention in Schaeffer’s book, where he is listed as one of the pioneers of musique concrète in Brazil.

In this way, I tried to capture not only the individual significance of this experience for the composer, but also his general impressions of what that period meant for a whole generation that came together around the same impasses and possibilities of aesthetic exploration.

I therefore propose an analysis of three pieces for choir written by Mendes in order to demonstrate the influence that electroacoustic music had on vocal and instrumental music in Brazil. The three pieces chosen for this study are: one written in an experimental style, which incorporated technomorphism most openly (Nascemorre, 1963); one less experimental, but still in the avant-garde lineage (Motet em Ré menor [Beba Coca-Cola], 1967); and one that takes a more traditional approach (Com Som, Sem Som, 1978). These pieces also follow a chronological order, so that we can show how and to what extent technomorphism was internalized in his compositional technique.

Chittum, John - Delving Through Artifacts - Finding the Score and its Meaning in Interactive Works

John Chittum

University of Missouri-Kansas (USA)

The notation systems used in contemporary art music can cause confusion and raise questions about how to analyze the music. Graphic notation, mathematically driven composition systems, Fluxus works, aleatory, and improvisation have changed the meaning of the score in relation to analysis. In electronic works, music is often performed or created without a traditional score. The score is still prized by many theorists and musicologists, but there is no denying that this valuable artifact has had its primacy as an aid for analysis weakened. In regard to works with performers, the question becomes “What is the score, and what does it tell us?” This presentation looks at two works, Aphorisms on Futurism by Andrew Seager Cole, and The Machine the Sneetches Built by John Chittum and Bobby Zokaites. These two pieces offer a cross section of interactivity: Aphorisms uses triggered playback files and live signal processing, while Sneetches is an interactive installation using Wiimote controllers, Max/MSP, video games, and a kinetic sculpture. This presentation will look at what constitutes “the score” in these works, and what the score conveys in these two pieces.

In my previous research, I took a broad view of approaches to analyzing interactive multimedia. The study, originally presented at EMS 2012 in Stockholm, brought in perspectives from researchers and musicians including John Croft, Denis Smalley, Barry Truax, and Gunther Schuller. The paper also created a taxonomy of interactive media, and offered a methodology for approaching the analysis of interactive works. The proposed methodology is little more than a framework, and demands more extensive work in specific areas. This presentation is a follow-up on one of those areas of concern.

Before delving into specific works and the use of the score in analysis, a definition of a score and its content must be provided. A traditional score describes the basic elements of music: pitch, rhythm, meter, harmony, dynamics, form, texture, and timbre. Symbols (the notation) have specific meaning, and musical structures are developed around these meanings. This allows a researcher to use the score as the primary means of analysis, since all information needed for the performance of the piece is contained on the piece of paper. This kind of score is of course connected most obviously to music written during the “Common Practice Era,” encompassing Western art music dating from the 17th century to ca. 1925.

In electronic music, the lexicon and grammar of music is defeated by the inclusion of “extra-musical” sounds that defy standard notation. These extra-musical sounds are often developed in ways other than pitch, making the pitch-centric style of notation in Western art music insufficient to convey their meaning. Because of this, scores that include mixed forces (live musicians with electronics, or interactive pieces) often utilize graphic representations of sound, text, and other non-traditional elements. These can include waveform diagrams, abstract drawings, or specialized symbols. These types of notation carry their own lexicon and syntax, providing similar information to the notation of the Common Practice Era but removing the original associations of standard notation. In particular, texture and dynamics are shown effectively in graphic representations, while other facets, such as pitch and timbre, are often displayed, respectively, as standard notation and text.

The purpose of the score is to act as a set of guidelines for performance, a clear set of instructions that give the majority of information needed to perform the work. The more the score holds to Common Practice Era notation and standard instrumentations, the more precisely the notation can be realized. In theory research, the score is the “Holy Grail” of the music, the everlasting form for the ephemeral art that occurs on stage. For works utilizing improvisation, aleatory, graphic representations of electronics, or few electronic notations, the score transitions from an all encompassing framework to a sketch which provides only a general sense of direction. In works of mixed forces, traditionally notated instruments and non-traditionally notated (or un-notated) electronics, the score acts as a point of synchronization and loose directions on what may occur and how one may interact with the electronics. Within these scores, traditional and non-traditional notations are displayed side by side. Neither notation by itself, nor the combination of notations can fully portray what is happening musically. In interactive electronic works, the insufficiency of the notation to accurately portray the music is the most apparent. A text description of the effect does not portray all the information regarding the end result.

In musical analysis, other artifacts beyond the score are seen as secondary sources. These artifacts include recordings, research papers, and presentations. However, in the case of some electronic pieces, the only artifact is a recording. In interactive pieces, a recording only captures a single possible performance, which leaves many possibilities undiscovered. By using published papers and presentations, a researcher can fill the gap.

In works that require some form of interactivity, researchers also have another artifact available: the program. Any piece that includes real-time processes will require some sort of hardware or software to perform the function. This gives the researcher full access to the electronics, be it single playback files, synthesis, live DSP, or any other function that may be occurring. For some processes, this gives the researcher the “if-then” statements which guide the actions of the program. In the case of a work with mixed forces, such as Aphorisms, this can be a simple trigger: “If I push the space bar, this audio file plays and these processes are done to the voice.” For complex interactions, it can define all of the possibilities, as in Sneetches: “If I press Up on the d-pad, the pitch skips a major third. If I press right, it steps up a half-step. If I tilt the Wiimote left and right, it goes up or down in a glissando fashion.” The above examples are simple, and show another important point: the researcher does not have to be an accomplished programmer, but must be willing to “play” the program itself, just as a performer would, to learn all the possible rules. This allows for a quantitative breakdown of relationships, the “if-thens” of everything from amplitude tracking to random generation.
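The “if-then” rules quoted for Sneetches can be written out as a small Python sketch. This is not the installation's Max/MSP patch: the function names, the use of MIDI-style note numbers, the upward direction of the major-third skip, and the bend range of two semitones for the tilt are all assumptions made for illustration. The `discover_rules` helper mimics a researcher “playing” the program to tabulate what each control does.

```python
# Hypothetical sketch of the Sneetches-style "if-then" rules.
# Pitches are MIDI-style note numbers; intervals are in semitones.

MAJOR_THIRD = 4
HALF_STEP = 1


def apply_button(pitch: float, button: str) -> float:
    """If Up is pressed, skip a major third; if Right is pressed, step a half-step."""
    if button == "up":
        return pitch + MAJOR_THIRD   # assumed to skip upward
    if button == "right":
        return pitch + HALF_STEP
    return pitch                     # other buttons leave the pitch unchanged


def apply_tilt(pitch: float, tilt: float, bend_range: float = 2.0) -> float:
    """Glissando: tilt in [-1, 1] bends the pitch continuously down or up."""
    tilt = max(-1.0, min(1.0, tilt))
    return pitch + tilt * bend_range


def discover_rules(buttons, reference_pitch: float = 60):
    """'Play' the program: try each button and record the interval it produces."""
    return {b: apply_button(reference_pitch, b) - reference_pitch for b in buttons}


if __name__ == "__main__":
    print(discover_rules(["up", "right", "down"]))
    print(apply_tilt(60, 0.5))
```

Tabulating the rules in this way gives the kind of quantitative breakdown of relationships that a recording of a single performance cannot provide.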

As stated before, the score is the main artifact for reproducing performance and for analysis. But in some interactive works, the written instructions may not give all the pertinent information. For a performer, finding all the relationships becomes like a game. This was the impetus for Sneetches, an interactive environment that is, essentially, a playground, where users can interact with various objects (two electronic stations with video games and synthesizers controlled via Wiimotes, and a large kinetic sculpture) that allow participants to play and discover relationships on their own. For Sneetches, the only written instructions were simple placards describing the basic functions of the synthesizer (play note, change pitch, change sound, change effect) and of the video game (choose game, trigger, restart), and calling for someone to help with the operation of the kinetic sculpture. In Sneetches, the score shifts from a Common Practice Era notion of a score to a combination of the programming, the visual stimuli from the video games which may influence the synthesizer participants, and the video recordings of the initial event. This runs contrary to Aphorisms, where Cole’s attention to detail in the notation of the electronic part provides a fairly well-rounded view of what is happening and when it occurs. Because of the attentiveness Cole put into the score, in particular to pitch and rhythm during cueing or synchronous moments, a traditional analysis of the piece, with the aid of the Pure Data patch, is plausible. Sneetches takes a more experimental approach, with its lack of notation, improvised elements, and novel visual stimuli to influence movements. The analysis is then pointed in other directions, namely the interactive process, musicological analysis of the incorporation of “the playground,” and the effect of the various interactions on the final product. Also, it is possible to figure out certain pitch tendencies based upon the way a participant interacts with the device, as well as possible structures based upon the musical gesture. None of these would be easily apparent even from video evidence of the display in action.

These two examples show the wide range of artifacts that can be used as the primary source for analysis in electronic works. They also illustrate how the power of the score as a set of instructions can be shifted to the computer program or hardware setup created by the composer: actions are no longer dictated specifically by pen and paper, but instead by rules created by the composer, and not always told specifically to the performer. This raises challenges in analysis, but by carefully examining each piece, a researcher can create a new “score” by combining several pieces of secondary evidence. The goal is to find the same evidence one would find in a traditional score: the limiting factors, the “if-thens,” and the underlying structure that defines the content of the piece. By delving into the programming (either by learning the language or through carefully guided performance), watching video, listening to recordings, or finding any published materials, a researcher can move away from the passive and static engagement with contemporary music and into the realm of the interactive.

Clarke, Michael ; Dufeu, Frédéric ; Manning, Peter - TIAALS: A New Generic Set of Tools for the Interactive Aural Analysis of Electroacoustic Music

Michael Clarke ; Frédéric Dufeu ; Peter Manning

University of Huddersfield ; University of Huddersfield ; Durham University (England)

Background

This paper introduces and demonstrates TIAALS, a new set of generic software tools designed to facilitate an interactive aural approach to the analysis of electroacoustic music. TIAALS is being developed as one element of a 30-month AHRC-funded project investigating the relationship between Technology and Creativity in Electroacoustic Music (TaCEM: http://www.hud.ac.uk/research/research-centres/cerenem/tacemtechnologyandcreativityinelectroacousticmusic/). TaCEM will examine a series of Case Studies, specific works exemplifying different technical and compositional approaches, from contextual, technical and analytical perspectives. It builds on the previous experience of the project team in terms of historical / contextual study (Manning 2013), organology of computer music (Dufeu 2010) and electroacoustic analysis (Clarke 2012).

In recent years there has been an increasing number of important texts on the analysis of electroacoustic music. All have faced a common challenge: how to present, in the form of written text and graphics, analyses of music that exists primarily in sound, not on the page. Interactive Aural Analysis (IAA) provides one approach to resolving these issues. It was first developed by Clarke for analyses of specific electroacoustic works, beginning with Jonathan Harvey’s Mortuos Plango, Vivos Voco in 2006, and later Denis Smalley’s Wind Chimes (2009) and Pierre Boulez’s Anthèmes 2 (forthcoming). The underlying principle is that analysis of such works, in which the musical development involves aspects that cannot be notated traditionally, such as complex textural transformations and subtle spectromorphological variations, is best undertaken and presented not solely by means of verbal and visual representations on the printed page but through the use of software permitting the analyst and the reader to engage with the musical materials interactively as sound. Technical exercises also form an important part of the IAA software, enabling readers to engage with the techniques used by the composer and discover their potential. Previously, only limited attention has been paid to the possibility of modeling the techniques employed as part of analytical study; modern software emulation facilitates a better understanding of the technical and creative processes that have underpinned the composition process. In each analysis, therefore, a substantial written text accompanies software that enables the exploration of the sound world and of the techniques used to produce the music. (For more information on IAA and the earlier analyses see http://www.hud.ac.uk/research/researchcentres/iaa// and for a fuller account of the ideas behind IAA see Clarke (2012).)

Within TaCEM, one important part of the project will be the making of an IAA for each of the case studies. In preparation for this, a set of generic software tools is being developed, both for use in the project and more widely by others. The tools are in many cases developed from those specifically produced for the earlier analyses, but they take advantage of significant new technical developments and are designed to be adaptable. Whereas with the previous IAAs the software was developed specifically for each work in question, the aim here is to create generic tools that can be of use with any piece of music as appropriate (TIAALS does not, however, include the technical exercises which, by their very nature, are specific to the individual works and the techniques used to produce them). A Beta version of the software will be released in February 2013; this will then be refined and extended as it is trialled by members of the TaCEM team and by others.

TIAALS is being made freely available. All the tools are built in Max6. This is so that they can be fully integrated into the software we devise to accompany the presentations of our Case Studies for the TaCEM project. Being built in Max also means that the tools are easily adaptable to different contexts and extensible.

TIAALS: Tools for Interactive Aural Analysis

1. Interactive Sonogram

Sonograms are often employed in analyses of electroacoustic music. However, presented as fixed graphical representations on the printed page, they are often severely limited in what they can show (see Clarke 2012). TIAALS, however, makes use of a sonogram that is interactive and aural. It is a highly developed version of the similar tool used in the analysis of Wind Chimes. The user can draw regions on the graphic display and hear the sound of just this region. It is also possible to scrub, moving a cursor of variable frequency range at variable speed through the display and hearing the results. Regions that have been drawn onto the sonogram display can be grouped and these groups soloed or muted. These and other features of the Interactive Sonogram enable users to interact with the sonogram and explore the musical significance of the visual display. It provides a means of investigating the different components of complex textures or timbres by identifying elements in the overall texture and hearing them in isolation, and possibly in slow motion.
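
The idea of hearing just one drawn region can be illustrated outside Max with a minimal Python sketch. It is not the TIAALS implementation: it assumes a mono signal held as a list of floats, uses a deliberately naive discrete Fourier transform built from the standard library, and the function name audition_region and its parameters are hypothetical. The sketch cuts out the selected time span, silences every frequency bin outside the selected band, and resynthesises what remains.

```python
import cmath
import math


def dft(samples):
    """Naive discrete Fourier transform (O(n^2)); adequate for short excerpts."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def idft(spectrum):
    """Inverse of dft(); returns the real part of each output sample."""
    n = len(spectrum)
    return [
        sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)).real / n
        for t in range(n)
    ]


def audition_region(samples, sample_rate, t_start, t_end, f_low, f_high):
    """Return only the sound inside a rectangular time/frequency selection.

    Times are in seconds, frequencies in Hz. All names are illustrative
    assumptions, not part of any published TIAALS interface.
    """
    start = round(t_start * sample_rate)
    end = round(t_end * sample_rate)
    segment = samples[start:end]
    n = len(segment)
    spectrum = dft(segment)
    for k in range(n):
        # Bins above n/2 mirror the negative frequencies of a real signal.
        freq = min(k, n - k) * sample_rate / n
        if not (f_low <= freq <= f_high):
            spectrum[k] = 0j
    return idft(spectrum)
```

A practical tool would more likely work on overlapping, windowed analysis frames (a short-time Fourier transform) rather than one transform over the whole selection; the single-transform version is used here only to keep the principle visible.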

2. The Chart Maker

Analysis is more than simply a matter of description: it is about making connections between musical ideas and showing the evolution of musical material, sometimes across long time spans. In the analysis of acoustic music, such relationships are traditionally presented using charts, often employing musical notation. Since musical notation and other forms of graphical representation are often of limited use in electroacoustic music (see Clarke 2012), TIAALS offers a means of creating aural charts in software. This builds on the Interactive Sonogram. Regions that have been created using the Interactive Sonogram (by drawing time and frequency selections on the visual display) can be exported into a Palette. The Palette can then be used as the basis for making aural charts to demonstrate features of the music. Regions in the Palette can be imported into a chart as a (labeled) button. Clicking on a button plays the region it represents (and regions in charts can be related back to their context in the work as a whole). Charts might, for example, be used to show the evolution of a particular type of sound or musical motif through the course of a work. Or they might be used to present a taxonomy of the sounds used in a work or a genealogical chart of the relationships between different sounds (see the Wind Chimes analysis for examples). Paradigmatic charts or other structural charts can be used to show the shape of the work. Which charts are most appropriate and how they are best presented is up to the analyst. TIAALS simply facilitates the creation of such charts, and the prioritization of aural experience and interaction with sound as the preferred means of communicating ideas about the music.

3. Pitch/Frequency Finder

Despite the greatly increased importance of timbral, textural and spatial components in much electroacoustic music, pitch continues to be a significant factor in the shaping of many works. This tool provides an aid to identifying significant pitch and frequency elements. Sections of a work can be analysed and data about the most prominent frequencies presented using both musical notation and numerical data. It is also possible to set up a pitch filter to help demonstrate the recurrence of key pitch components (e.g. harmonic fields) in the course of a passage.
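
As a rough illustration of reporting prominent frequencies both numerically and as pitches, the following Python sketch may be helpful. It is an assumption-laden toy rather than the tool itself: it uses a naive discrete Fourier transform, simple peak picking, twelve-tone equal temperament with A4 = 440 Hz, and hypothetical function names (prominent_frequencies, nearest_note).

```python
import cmath
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def dft_magnitudes(samples):
    """Magnitudes of a naive DFT for bins 0..n/2 (O(n^2), short excerpts only)."""
    n = len(samples)
    return [
        abs(sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def nearest_note(freq_hz, a4=440.0):
    """Nearest equal-tempered note name, assuming A4 = 440 Hz (MIDI note 69)."""
    midi = round(69 + 12 * math.log2(freq_hz / a4))
    return f"{NOTE_NAMES[midi % 12]}{midi // 12 - 1}"


def prominent_frequencies(samples, sample_rate, count=3):
    """Return the strongest spectral peaks as (frequency in Hz, note name) pairs."""
    mags = dft_magnitudes(samples)
    n = len(samples)
    # A peak is a bin louder than its lower neighbour and not quieter than its upper one.
    peaks = [
        k for k in range(1, len(mags) - 1)
        if mags[k] > mags[k - 1] and mags[k] >= mags[k + 1]
    ]
    peaks.sort(key=lambda k: mags[k], reverse=True)
    return [(k * sample_rate / n, nearest_note(k * sample_rate / n)) for k in peaks[:count]]


if __name__ == "__main__":
    sr = 8000
    tone = [math.sin(2 * math.pi * 440 * t / sr) + 0.5 * math.sin(2 * math.pi * 660 * t / sr)
            for t in range(800)]
    for freq, name in prominent_frequencies(tone, sr, count=2):
        print(f"{freq:.1f} Hz  {name}")
```

The frequency resolution of such a sketch is limited to the sample rate divided by the excerpt length, so real material would need longer windows or peak interpolation to separate closely spaced partials.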

4. Spatial Display

Spatial positioning of sound is a complex phenomenon and disaggregating a spatial mix is not an insignificant task. This task becomes even more complex in multichannel works. The spatial display tool in TIAALS does not claim to resolve all these complexities and needs to be used with intelligent reflection, but it can provide some useful insights. The spatial display tool (developed from an idea by Sam Freeman for the Wind Chimes analysis) colour-codes each frequency bin in the sonogram analysis according to the amplitude balance between left and right channels. This can give some indication of the spatial distribution of sounds across the frequency range at each moment in the work.
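
The principle of colour-coding bins by left/right balance can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the TIAALS code: it analyses a single stereo frame with a naive discrete Fourier transform, the balance formula (right minus left, divided by their sum) is one plausible choice among several, and the red/blue colour mapping and all function names are hypothetical.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Magnitudes of a naive DFT for bins 0..n/2 (O(n^2), one short frame)."""
    n = len(samples)
    return [
        abs(sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def balance_per_bin(left, right, floor=1e-6):
    """Per-bin balance in [-1, 1]: -1 fully left, 0 centred, +1 fully right.

    Bins whose combined magnitude is below `floor` are treated as silent (None).
    """
    balances = []
    for l_mag, r_mag in zip(dft_magnitudes(left), dft_magnitudes(right)):
        total = l_mag + r_mag
        balances.append(None if total < floor else (r_mag - l_mag) / total)
    return balances


def balance_to_rgb(balance):
    """Assumed colour scheme: red for left, blue for right, black for silence."""
    if balance is None:
        return (0, 0, 0)
    right_share = (balance + 1) / 2
    return (round(255 * (1 - right_share)), 0, round(255 * right_share))
```

Applied frame by frame, the colours returned by balance_to_rgb could be painted over the corresponding cells of a sonogram. As the text cautions, amplitude balance alone ignores phase and timing differences between channels, so the result is an indication rather than a full account of spatial position.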

Summary

TIAALS is a development from earlier Interactive Aural Analyses. As well as playing an important role in the TaCEM project, TIAALS provides a set of generic tools that can be used by any analyst seeking a means of working interactively with the sound of a piece in creating and presenting their analyses. IAA is not a method of automated analysis by computer (although we may build some automated options into later versions); it is primarily a set of tools for an analyst to use to help in their own interactive aural investigation of works and in the presentation of their findings. It is envisaged that TIAALS will be further refined and extended in response to our own needs in relation to the TaCEM project over the next two years and in response to feedback from other users.

j.m.clarke@hud.ac.uk ; f.dufeu@hud.ac.uk ; p.d.manning@dur.ac.uk

Coulter, John - The Art of Multichannel Electroacoustic Composition: A Review of Methods and Approaches

John Coulter

University of Auckland (New Zealand)

To a number of electroacoustic composers working in New Zealand, and presumably in other parts of the world too, the three-dimensional acousmatic image seems to house the most alluring of creative possibilities – expressive musical forms that we have not yet been privileged to hear. More than 60 years have passed since the birth of electroacoustic music, yet it seems that only now we are beginning to come to terms with the complexities of composing with space. New technologies are providing a means of advancement by way of experimentation and through engagement in the creation of new work; however, there is still much debate between composers working with multichannel systems regarding the validity of such methods and approaches. Some of the most experienced and decorated composers working in New Zealand often attest to the fact that ‘there are no rules’ – that each sound carries with it an entirely new set of demands that transcends any previously established concepts or constants that may have been observed to date. While I admire this stance, I am not entirely convinced by it, and as a teacher of electroacoustic composition, I find the extreme viewpoint quite unsatisfactory.

This paper, then, is an attempt to mitigate this purely subjective standpoint – to offer a review of current theories and strategies surrounding the multichannel domain that might be considered useful to the ordinary electroacoustic composer. The study is not a venture in objectifying artistic practices; rather, it seeks to present a fluid set of conceptual contrivances, based on a review of expert domain literature and repertoire and on discussions between experienced composers working in New Zealand, that may lead to an opening-up of pragmatic possibilities concerning both the spatial treatment of individual sounds and the spatial relationships between sounds over the duration of any given phrase or work. The investigation offers a local (New Zealand) point of view.

Regarding the method of enquiry, the study includes a comparison of key principles gleaned from the essential literature listed in the accompanying annotated bibliography, analysis of compositional techniques from the catalogue of selected repertoire, and transcriptions of group discussions between the author and leading members of New Zealand’s electroacoustic music fraternity. With reference to the last point, and further to ongoing research in multichannel electroacoustic composition on the part of the author, two meeting periods have been specified: 11 May 2013, and 10 May 2014. The research group, consisting of John Coulter, John Cousins, Gerardo Dirie, John Elmsly, Dugal McKinnon, Michael Norris, and David Rylands (TBC), will meet in a specially designed facility (a 33-channel 8-metre diameter geodesic dome) located in Henderson, Auckland to present creative work and to discuss concepts and techniques relevant to the title of this paper. Issues relating to localisation, proximity, and acousmatic imaging will be discussed alongside time-domain concerns such as metamorphosis of spatial image, perceptual space, and transsubjectivity. The spoken presentation at EMS13 will include a report on the discussion points raised at the first meeting.

In terms of outcomes, it is anticipated that the study will result in an elucidation of the established guiding principles, constants and variables surrounding the domain of multichannel electroacoustic composition. Specifically, it is expected that groupings of actions might be identified in response to individual creative ideas, and that these options – relevant only to multichannel electroacoustic composition – will display specific character traits. This will be presented as a compendium of known methods and approaches concerning the spatialisation of individual sounds, alongside new findings with reference to the nature and evolution of relationships between acousmatic images. It is hoped that this information will serve the growing fraternity of New Zealand electroacoustic composers who have chosen to engage with multichannel technologies.

Couprie, Pierre - Analysis of interactive electroacoustic music: proposals, tools, methods

Pierre Couprie

Université Paris-Sorbonne / De Montfort University (France / England)

“Le réel n’existe plus” (Jean Baudrillard)